Plugin Playground AI Integration for Faster Plugin Prototyping

Building a JupyterLab plugin usually starts with small experiments – you test your ideas, change a few lines, reload, and repeat. Learn how we integrated AI into the Playground AI

Control

Tech Lowdown with Dillon Roach

I’ll be brief. I value your time. You’re in control.

For those of you following this series, I hope you've had the chance to go hands-on with some of the best proprietary models by now. The leading paid models are quite useful at this point and will continue to improve. But you don't own them: you rent them as a utility and have limited control over their behavior, their availability, and how they handle your private data. They're still great services and I use them myself, but for certain things you need to run models on your own hardware. What you can run will naturally depend on the computing resources you have available, but I hope to at least give you some of the tools and vocabulary to get going.

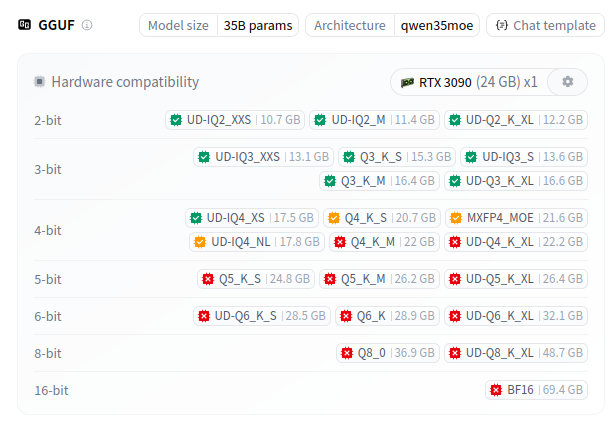

For folks feeling a bit more adventurous, LibreChat or Open WebUI can serve as the frontend, with llama.cpp handling model inference. In this case you'll need to find and download models yourself. Taking https://huggingface.co/unsloth/Qwen3.5-35B-A3B-GGUF as an example, you'll see under 'Files and Versions' that multiple versions of the same model are available. These are distinct 'quantizations', different compressions of the full-precision weights, and you only need to download one .gguf file. The naming convention is confusing, but don't get too caught up in it at first: you generally want any Q4 or above that will fit on your device. Hugging Face shows a size rubric for each model on the model card (example below). Anything green or yellow is a good pick, and if no 4-bit-or-higher file is either, it's likely time to go hunting for a smaller model.
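The "Q4 or above that fits" rule of thumb comes down to simple arithmetic: a quantized file is roughly the parameter count times the bits stored per weight, divided by 8 to get bytes. Here is a minimal Python sketch of that reasoning, not a replacement for the Hugging Face rubric. The bits-per-weight figures are assumed ballpark values (real files vary by architecture and by which tensors stay at higher precision), and the 2 GB headroom for context and the operating system is a hypothetical default. The function names are illustrative only and don't come from llama.cpp or Hugging Face.

```python
# Assumed, approximate bits per weight for common GGUF quantizations.
# Real file sizes vary; treat these as ballpark figures only.
APPROX_BITS_PER_WEIGHT = {
    "Q4_K_M": 4.8,
    "Q5_K_M": 5.7,
    "Q6_K": 6.6,
    "Q8_0": 8.5,
}


def estimated_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Weights only: (params * bits) / 8 bytes, expressed in GB (1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def best_quant_that_fits(n_params_billion: float, memory_gb: float,
                         headroom_gb: float = 2.0):
    """Return the highest-precision quant (Q4 or above) whose estimated size
    plus a hypothetical headroom fits in memory_gb, or None if none fit."""
    fitting = [
        (bpw, name)
        for name, bpw in APPROX_BITS_PER_WEIGHT.items()
        if estimated_size_gb(n_params_billion, bpw) + headroom_gb <= memory_gb
    ]
    if not fitting:
        return None  # nothing 4-bit or above fits: time to find a smaller model
    return max(fitting)[1]  # highest bits per weight among those that fit


if __name__ == "__main__":
    # A 35B-parameter model, taking the "35B" in the example repo name at face value.
    for memory in (16, 24, 32):
        print(memory, "GB ->", best_quant_that_fits(35, memory))
    # 16 GB -> None, 24 GB -> Q4_K_M, 32 GB -> Q6_K
```

The parameter count here is the total, not the active count: a mixture-of-experts model still stores all of its weights in the file, even if only a fraction are used per token.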

